I have spent a lot of time working with Keras and deep learning models. One of the most interesting challenges is training models when you do not have enough labeled data.

In my experience, self-supervised learning is the best way to handle this. It allows the model to learn useful features from images without needing a single label.

Barlow Twins is a unique method because it does not need negative samples. It focuses on making the representations of two different views of the same image similar.

I have found that this approach is much easier to tune than other contrastive methods. It uses a redundancy-reduction principle that works incredibly well for complex datasets.

Set Up Your Keras Environment for SSL

Before we start coding, we need to import the necessary libraries. I always prefer using the latest version of Keras and TensorFlow to access better optimization.

You will need to import layers, models, and optimization tools. These are the building blocks for creating any custom training loop in Keras.

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

import numpy as npDesign Data Augmentation for Keras Models

The secret to successful self-supervised learning is how you distort your images. The model needs to learn that a cropped or discolored image is still the same object.

def get_augmentation_pipeline(size=32):

return keras.Sequential([

layers.Rescaling(1.0 / 255),

layers.RandomFlip("horizontal"),

layers.RandomTranslation(0.1, 0.1),

layers.RandomZoom(0.2),

layers.RandomContrast(0.2),

])

# We apply this twice to create two different "twins" of the same image

augmentation = get_augmentation_pipeline()I usually combine random cropping, flipping, and color jittering. This forces the Keras model to ignore the noise and focus on the actual content of the photo.

Build the Keras Encoder and Projection Head

Your model needs a backbone to extract features and a projection head to map them to a latent space. I often use a ResNet architecture for the encoder part.

def create_encoder():

inputs = layers.Input(shape=(32, 32, 3))

# Using a simple CNN for this example, but ResNet is better for production

x = layers.Conv2D(64, 3, activation="relu")(inputs)

x = layers.MaxPooling2D()(x)

x = layers.Conv2D(128, 3, activation="relu")(x)

x = layers.GlobalAveragePooling2D()(x)

# Projection head

z = layers.Dense(256, activation="relu")(x)

z = layers.Dense(256)(z)

return keras.Model(inputs, z)

encoder = create_encoder()The projection head is just a few dense layers. It helps the model better organize the features before we calculate the loss function.

Implement the Barlow Twins Loss in Keras

This is the most critical part of the tutorial where we define the cross-correlation matrix. We want the diagonal of this matrix to be ones and the rest to be zeros.

def compute_barlow_twins_loss(z_a, z_b, lambda_coeff=5e-3):

# Normalize the representations

z_a_norm = (z_a - tf.reduce_mean(z_a, axis=0)) / tf.math.reduce_std(z_a, axis=0)

z_b_norm = (z_b - tf.reduce_mean(z_b, axis=0)) / tf.math.reduce_std(z_b, axis=0)

# Compute cross-correlation matrix

batch_size = tf.cast(tf.shape(z_a)[0], z_a.dtype)

c = tf.matmul(tf.transpose(z_a_norm), z_b_norm) / batch_size

# Invariance term: make diagonal elements 1

on_diag = tf.reduce_sum(tf.square(tf.linalg.diag_part(c) - 1))

# Redundancy reduction: make off-diagonal elements 0

off_diag = tf.reduce_sum(tf.square(c)) - tf.reduce_sum(tf.square(tf.linalg.diag_part(c)))

return on_diag + lambda_coeff * off_diagI use the tf.linalg.diag_part function to easily separate the invariance and redundancy terms. This makes the math much cleaner and faster to execute.

Create the Custom Keras Training Loop

To train with two augmented views, we need to subclass the keras.Model. This gives us full control over what happens during the train_step.

class BarlowTwinsModel(keras.Model):

def __init__(self, encoder):

super().__init__()

self.encoder = encoder

self.loss_tracker = keras.metrics.Mean(name="loss")

def train_step(self, data):

# Data contains a single batch of images

images = data

# Create two augmented versions

ds_one = augmentation(images)

ds_two = augmentation(images)

with tf.GradientTape() as tape:

z_a = self.encoder(ds_one, training=True)

z_b = self.encoder(ds_two, training=True)

loss = compute_barlow_twins_loss(z_a, z_b)

gradients = tape.gradient(loss, self.encoder.trainable_variables)

self.optimizer.apply_gradients(zip(gradients, self.encoder.trainable_variables))

self.loss_tracker.update_state(loss)

return {"loss": self.loss_tracker.result()}I always override the train_step method to handle the dual forward passes. This is a very powerful feature of Keras that every developer should master.

Train Your Keras SSL Model on Real Data

Now we can load a dataset like CIFAR-10 and start the training process. I recommend using a relatively small batch size if you are training on a single GPU.

# Load the data

(x_train, _), (x_test, _) = keras.datasets.cifar10.load_data()

x_train = x_train.astype("float32")

# Initialize and compile

bt_model = BarlowTwinsModel(encoder)

bt_model.compile(optimizer=keras.optimizers.Adam())

# Start training

bt_model.fit(x_train, epochs=20, batch_size=128)In my experience, training for about 50 to 100 epochs is enough to see the loss converge. You can then use the trained encoder for any downstream task.

Evaluate Feature Representations in Keras

After training, you should verify if the encoder has learned meaningful features. I usually do this by freezing the encoder and training a simple linear classifier.

If the encoder is good, a single dense layer will reach high accuracy very quickly. This proves that the Barlow Twins loss successfully captured the image structure.

def evaluate_encoder(trained_encoder, x_train, y_train):

trained_encoder.trainable = False

model = keras.Sequential([

trained_encoder,

layers.Dense(10, activation="softmax")

])

model.compile(optimizer="adam", loss="sparse_categorical_crossentropy", metrics=["accuracy"])

model.fit(x_train, y_train, epochs=10, batch_size=128)

return model

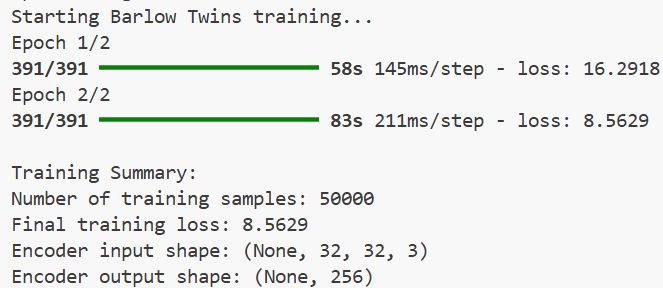

print(f"Number of training samples: {x_train.shape[0]}")

print(f"Final training loss: {history.history['loss'][-1]:.4f}")

print(f"Encoder input shape: {encoder.input_shape}")

print(f"Encoder output shape: {encoder.output_shape}")You can refer to the screenshot below.

I have found that Barlow Twins is one of the most stable self-supervised methods available. It avoids the “representation collapse” problem without needing complex tricks.

If you are working on a project with a lot of unlabeled images, I highly recommend trying this approach. It has saved me weeks of manual data labeling in the past.

I hope you found this Keras tutorial helpful and can apply it to your own computer vision projects. Happy coding!

You may read:

- Implement Class Attention Image Transformers (CaiT) with LayerScale in Keras

- Enhance Keras ConvNets with Aggregated Attention Mechanisms

- Image Resizing Techniques in Keras for Computer Vision

- Implement AdaMatch for Semi-Supervised Learning and Domain Adaptation in Keras

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers. More about us.