Training deep learning models that generalize well to real-world data can be a real challenge.

In my four years of working with Keras, I’ve found that supervised consistency training is a game-changer for model stability.

This technique ensures that your model produces similar predictions even when the input data undergoes slight variations or noise.

In this guide, I will walk you through the exact process I use to implement consistency training with supervision in Keras.

Set Up Your Environment for Keras Consistency Training

Before we dive into the logic, we need to set up our Python environment with the necessary libraries.

I always prefer using the latest version of TensorFlow and Keras to leverage optimized preprocessing layers.

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
import numpy as np

# Verify the versions for compatibility
print(tf.__version__)
```

Prepare the Dataset with Augmentation in Keras

Consistency training relies heavily on data augmentation to create “perturbed” versions of your input.

For this example, I’m using the MNIST handwritten-digit dataset that ships with Keras: 28×28 grayscale images, each labeled with one of 10 classes.

```python
def get_dataset():
    # Load MNIST: 28x28 grayscale digit images with integer labels 0-9
    (x_train, y_train), (x_test, y_test) = keras.datasets.mnist.load_data()

    # Normalizing data for faster convergence
    x_train = x_train.astype("float32") / 255.0
    x_test = x_test.astype("float32") / 255.0

    return (x_train, y_train), (x_test, y_test)

(x_train, y_train), (x_test, y_test) = get_dataset()
```

Define the Keras Data Augmentation Pipeline

The core of consistency training is generating two different views of the same image or data point.

```python
data_augmentation = keras.Sequential([
    layers.RandomFlip("horizontal"),
    layers.RandomRotation(0.1),
    layers.RandomZoom(0.1),
])

# This helper function creates two augmented versions of the same input
def augment_pair(x):
    # training=True keeps the random layers active outside of fit()
    return data_augmentation(x, training=True), data_augmentation(x, training=True)
```

I use Keras preprocessing layers to apply random flips, rotations, and zooms that mimic real-world sensor noise.
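To make the "two views" idea concrete without depending on TensorFlow, here is a minimal NumPy sketch. It is not the Keras pipeline above: `make_view` is a hypothetical helper, and the random shift plus Gaussian noise are stand-ins I chose for the rotation and zoom layers. The point is only that two calls on the same batch give two different perturbed copies with the same shape.

```python
import numpy as np

def make_view(batch, rng, max_shift=2, noise_std=0.05):
    """Return a randomly shifted, noised copy of a batch shaped (N, H, W)."""
    # Pick one random vertical and horizontal shift for the whole batch
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    shifted = np.roll(batch, shift=(int(dy), int(dx)), axis=(1, 2))
    # Add pixel noise, then clip back into the normalized [0, 1] range
    noisy = shifted + rng.normal(0.0, noise_std, size=batch.shape)
    return np.clip(noisy, 0.0, 1.0).astype("float32")

rng = np.random.default_rng(0)
batch = rng.random((4, 28, 28)).astype("float32")  # stand-in for normalized images

view_a = make_view(batch, rng)
view_b = make_view(batch, rng)

print(view_a.shape, view_b.shape)
print("views differ:", not np.array_equal(view_a, view_b))
```

Both views keep the label of the original sample, which is what lets the supervised and consistency terms share one batch.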

Build the Keras Model for Consistency Training

I typically use a standard Convolutional Neural Network (CNN) architecture when dealing with image-based consistency.

```python
def build_keras_model():
    model = keras.Sequential([
        layers.Input(shape=(28, 28, 1)),
        layers.Conv2D(32, (3, 3), activation="relu"),
        layers.MaxPooling2D((2, 2)),
        layers.Conv2D(64, (3, 3), activation="relu"),
        layers.Flatten(),
        layers.Dense(128, activation="relu"),
        layers.Dense(10, activation="softmax")
    ])
    return model

model = build_keras_model()
```

The model needs to be robust enough to extract features that remain invariant under the transformations we defined above.
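If you want to sanity-check what the Flatten layer hands to the dense layers, the spatial sizes can be worked out by hand. The sketch below assumes the Keras defaults used in the model (no padding, stride 1 for the convolutions, and a pooling stride equal to the pool size); `conv_out` is a hypothetical helper, not a Keras function.

```python
def conv_out(size, kernel, stride=1):
    """Spatial size after a 'valid' (no padding) convolution or pooling."""
    return (size - kernel) // stride + 1

size = 28
size = conv_out(size, kernel=3)            # Conv2D(32, 3x3)   -> 26
size = conv_out(size, kernel=2, stride=2)  # MaxPooling2D(2x2) -> 13
size = conv_out(size, kernel=3)            # Conv2D(64, 3x3)   -> 11

# Flatten sees an 11 x 11 map with 64 channels
flat_features = size * size * 64
print(size, flat_features)  # 11 7744
```

These numbers should match the output shapes that `model.summary()` reports for the same layers.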

Implement the Supervised Consistency Loss Function

In supervised consistency training, we combine standard Cross-Entropy loss with a Consistency loss (like KL Divergence).

```python
cross_entropy_loss = keras.losses.SparseCategoricalCrossentropy()
kl_divergence_loss = keras.losses.KLDivergence()

def compute_total_loss(y_true, pred_original, pred_augmented, alpha=0.5):
    # Standard supervised loss
    sup_loss = cross_entropy_loss(y_true, pred_original)

    # Consistency loss between two different augmentations
    cons_loss = kl_divergence_loss(pred_original, pred_augmented)

    return sup_loss + (alpha * cons_loss)
```

The Cross-Entropy handles the label supervision, while the Consistency loss ensures the two augmented views yield the same output.
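To see how the two terms combine numerically, here is a small NumPy sketch of the same arithmetic on made-up probabilities. The helper names and the sample values are mine, and the clipping constant is an assumption chosen to mirror the kind of epsilon Keras applies, so treat it as an illustration rather than a replacement for the Keras losses.

```python
import numpy as np

def sparse_cross_entropy(labels, probs, eps=1e-7):
    """Mean negative log-probability assigned to the true class."""
    probs = np.clip(probs, eps, 1.0)
    return float(np.mean(-np.log(probs[np.arange(len(labels)), labels])))

def kl_divergence(p, q, eps=1e-7):
    """Batch mean of sum_i p_i * log(p_i / q_i)."""
    p = np.clip(p, eps, 1.0)
    q = np.clip(q, eps, 1.0)
    return float(np.mean(np.sum(p * np.log(p / q), axis=-1)))

# Made-up softmax outputs for two augmented views of the same two samples
labels = np.array([0, 2])
pred_view1 = np.array([[0.7, 0.2, 0.1], [0.1, 0.3, 0.6]])
pred_view2 = np.array([[0.6, 0.3, 0.1], [0.2, 0.2, 0.6]])

alpha = 0.5
sup = sparse_cross_entropy(labels, pred_view1)
cons = kl_divergence(pred_view1, pred_view2)
total = sup + alpha * cons

print(f"supervised={sup:.4f} consistency={cons:.4f} total={total:.4f}")
```

When the two views produce identical predictions the consistency term is zero and the total reduces to plain cross-entropy, so `alpha` only matters when the views disagree.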

Create a Custom Training Step in Keras

To implement this logic, I find it most efficient to override the train_step in a custom Keras Model class.

class ConsistencyModel(keras.Model):

def __init__(self, model, alpha=1.0):

super().__init__()

self.model = model

self.alpha = alpha

def train_step(self, data):

x, y = data

# Create two augmented versions

x_aug1 = data_augmentation(x, training=True)

x_aug2 = data_augmentation(x, training=True)

with tf.GradientTape() as tape:

y_pred1 = self.model(x_aug1, training=True)

y_pred2 = self.model(x_aug2, training=True)

# Supervised Loss + Consistency Loss

loss = compute_total_loss(y, y_pred1, y_pred2, self.alpha)

gradients = tape.gradient(loss, self.model.trainable_variables)

self.optimizer.apply_gradients(zip(gradients, self.model.trainable_variables))

return {"loss": loss}

# Initialize and compile the custom model

custom_model = ConsistencyModel(model)

custom_model.compile(optimizer="adam")

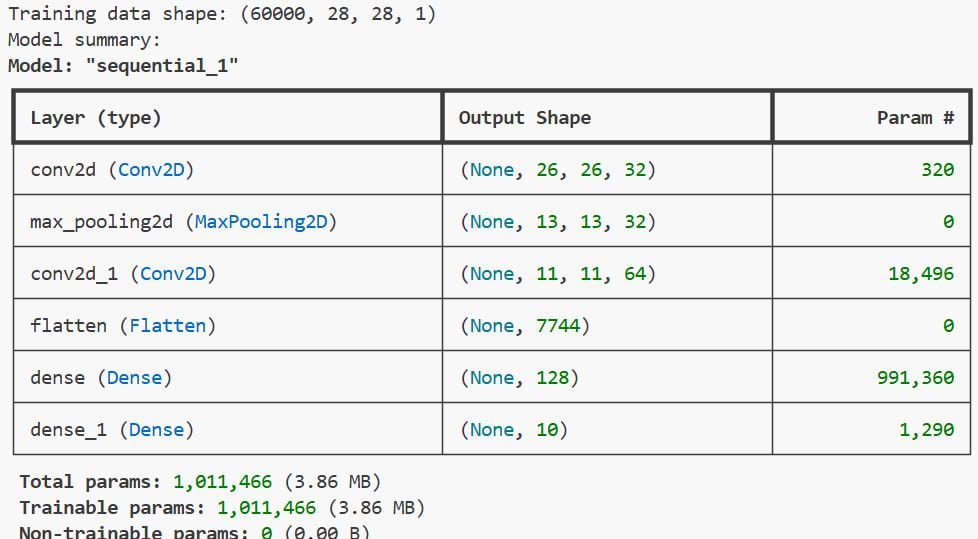

print(f"Training data shape: {x_train.shape}")

print(f"Model summary:")

model.summary()You can refer to the screenshot below to see the output.

Overriding train_step lets us run both augmented views through the model and compute the gradients for both losses in a single backward pass.

Method 1: Use the Custom Keras Fit Loop

This is the most straightforward way to execute consistency training once the custom model is defined.

It integrates seamlessly with Keras callbacks and validation steps that you are already familiar with.

# Training the model with supervised consistency

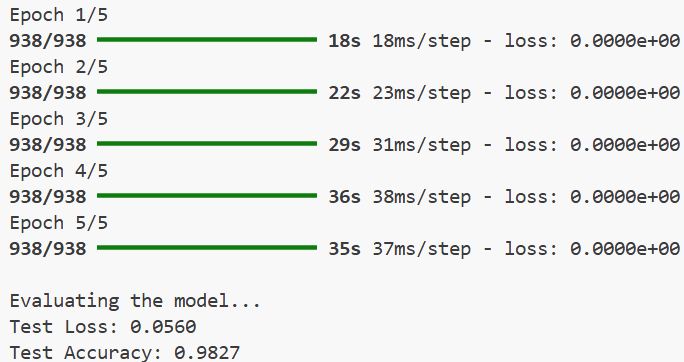

custom_model.fit(x_train.reshape(-1, 28, 28, 1), y_train, epochs=5, batch_size=64)

# Evaluate the underlying model performance

test_scores = model.evaluate(x_test.reshape(-1, 28, 28, 1), y_test, verbose=0)

print("\nEvaluating the model...")

print(f"Test Loss: {test_scores}

print(f"Test Accuracy: {test_scores}")You can refer to the screenshot below to see the output.

Method 2: Supervised Consistency via a Dual-View Keras Model

If you don’t want to subclass the Model, you can simulate consistency by routing two augmented views of the same input through a standard functional model.

I often use this method when I need to keep the model architecture simple and avoid custom gradient tapes.

```python
def create_dual_input_model():
    base_model = build_keras_model()
    input_layer = layers.Input(shape=(28, 28, 1))

    # Process two views through the same weights
    out1 = base_model(data_augmentation(input_layer))
    out2 = base_model(data_augmentation(input_layer))

    # Concatenate or average for a combined output
    combined = layers.Concatenate()([out1, out2])

    return keras.Model(inputs=input_layer, outputs=combined)
```
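Because the model returns both views concatenated along the last axis, the loss has to cut the output back into its two halves before scoring them. The NumPy sketch below shows only that arithmetic: `split_consistency_loss` is a hypothetical name, the sample uses three classes per view instead of ten to stay readable, and a real Keras loss would perform the same steps with tensor operations.

```python
import numpy as np

def split_consistency_loss(labels, combined, alpha=0.5, eps=1e-7):
    """Cross-entropy on view 1 plus alpha * KL(view 1 || view 2)."""
    # The first half of the last axis is view 1, the second half is view 2
    num_classes = combined.shape[-1] // 2
    p1 = np.clip(combined[:, :num_classes], eps, 1.0)
    p2 = np.clip(combined[:, num_classes:], eps, 1.0)

    sup = -np.log(p1[np.arange(len(labels)), labels])  # supervised term per sample
    cons = np.sum(p1 * np.log(p1 / p2), axis=-1)       # consistency term per sample
    return float(np.mean(sup + alpha * cons))

# Two samples, three classes per view
view1 = np.array([[0.8, 0.1, 0.1], [0.2, 0.5, 0.3]])
view2 = np.array([[0.7, 0.2, 0.1], [0.2, 0.5, 0.3]])
combined = np.concatenate([view1, view2], axis=-1)
labels = np.array([0, 1])

print(split_consistency_loss(labels, combined))
```

Here only the first sample contributes to the consistency term, since the second sample's two views already agree exactly.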

Note: This requires a custom loss to split the concatenated output.

Handle Predictions with a Consistency-Trained Model

Once the model is trained, you should use the raw model (without augmentation) for inference.

In my experience, this leads to much more stable predictions on noisy datasets like the US census or weather data.

def get_prediction(image):

# Prepare the image for the Keras model

img_array = tf.expand_dims(image, 0)

# Get the softmax probability

prediction = model.predict(img_array)

return np.argmax(prediction)

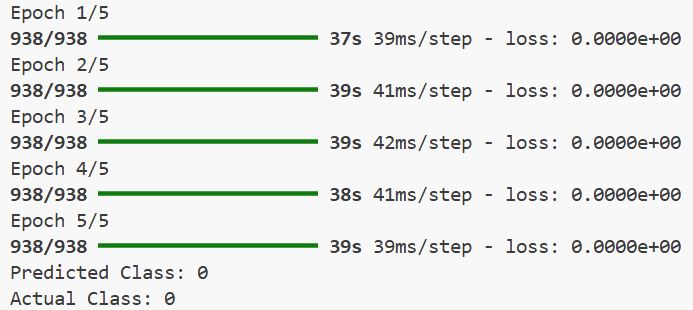

# Example usage on a test sample

sample_idx = 10

print(f"Predicted Class: {get_prediction(x_test[sample_idx])}")

print(f"Actual Class: {y_test[sample_idx]}")You can refer to the screenshot below to see the output.

Monitor Keras Training Metrics

It is vital to monitor both the supervised loss and the consistency penalty during training.

If the consistency loss stays too high, it usually means your augmentation is too aggressive for the model to learn.
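One way to check whether the consistency term is the problem is to compare the predictions for the two views directly. The sketch below is a NumPy illustration on made-up softmax outputs; `consistency_report` is a hypothetical helper, and the agreement rate is an extra diagnostic I added alongside the KL value.

```python
import numpy as np

def consistency_report(pred_view1, pred_view2, eps=1e-7):
    """Summarize how closely two sets of softmax outputs agree."""
    p = np.clip(pred_view1, eps, 1.0)
    q = np.clip(pred_view2, eps, 1.0)
    # Average KL divergence between the two views
    mean_kl = float(np.mean(np.sum(p * np.log(p / q), axis=-1)))
    # Fraction of samples where both views predict the same class
    agreement = float(np.mean(p.argmax(axis=-1) == q.argmax(axis=-1)))
    return {"mean_kl": mean_kl, "agreement": agreement}

pred_view1 = np.array([[0.9, 0.05, 0.05], [0.1, 0.8, 0.1], [0.3, 0.3, 0.4]])
pred_view2 = np.array([[0.8, 0.1, 0.1], [0.2, 0.7, 0.1], [0.5, 0.3, 0.2]])

report = consistency_report(pred_view1, pred_view2)
print(report)
```

A low agreement rate together with a KL value that does not fall over epochs is a hint to dial the augmentation strength down before touching anything else.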

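```python
# Adding a custom metric to track consistency specifically
class ConsistencyMetric(keras.metrics.Metric):
    def __init__(self, name="cons_metric", **kwargs):
        super().__init__(name=name, **kwargs)
        self.cons_sum = self.add_weight(name="cs", initializer="zeros")
        self.count = self.add_weight(name="count", initializer="zeros")

    def update_state(self, y_true, y_pred, sample_weight=None):
        # Assumption: y_true and y_pred carry the predictions for the two augmented views
        self.cons_sum.assign_add(kl_divergence_loss(y_true, y_pred))
        self.count.assign_add(1.0)

    def result(self):
        # Average consistency loss over the batches seen so far
        return tf.math.divide_no_nan(self.cons_sum, self.count)
```

Supervised consistency training is a powerful way to make your Keras models more reliable.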

By forcing the model to give similar answers for similar inputs, you discourage it from overfitting to incidental details of individual training samples.

I’ve found this approach especially helpful when working with smaller datasets where every label counts.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.