Handling structured data in deep learning used to feel like a constant battle with boilerplate code and manual preprocessing pipelines.

I remember spending hours manually encoding strings and scaling numerical values before I discovered the power of the Keras FeatureSpace utility.

In this tutorial, I’ll show you how to leverage FeatureSpace for advanced scenarios, making your model inputs cleaner and much more efficient to manage.

Integrate Multi-Modal Feature Types in Keras FeatureSpace

When building models for complex datasets like US real estate or insurance claims, you often deal with a mix of integers, floats, and categorical strings.

I find that using FeatureSpace allows me to define these types in a single dictionary, ensuring that the preprocessing logic stays coupled with the model.

```python
import tensorflow as tf
from tensorflow.keras import utils

# Sample US real estate data (features only)
data = {
    "zip_code": ["90210", "10001", "60601", "30301"],
    "property_type": ["House", "Condo", "House", "Apartment"],
    "square_feet": [2500.0, 1200.0, 1800.0, 900.0],
    "price_k": [1200.0, 850.0, 600.0, 450.0],
}
labels = [1, 0, 1, 0]

# Yield (features, label) pairs; FeatureSpace only ever sees the feature dict
dataset = tf.data.Dataset.from_tensor_slices((data, labels)).batch(2)

feature_space = utils.FeatureSpace(
    features={
        "zip_code": "string_categorical",
        "property_type": "string_categorical",
        "square_feet": "float_normalized",
        "price_k": "float_normalized",
    },
    output_mode="concat",
)

# adapt() learns vocabularies and normalization statistics from the features
feature_space.adapt(dataset.map(lambda x, y: x))

for x, y in dataset.take(1):
    preprocessed_x = feature_space(x)
    print(f"Preprocessed shape: {preprocessed_x.shape}")
```

You can refer to the screenshot below to see the output.

This approach reduces the risk of “training-serving skew”: the normalization statistics (mean and variance) computed during adapt() are stored inside the FeatureSpace's preprocessing layers, so exactly the same constants are applied at inference time.

I prefer this method over manual Scikit-Learn pipelines because it allows the model to accept raw dictionary inputs directly during inference.

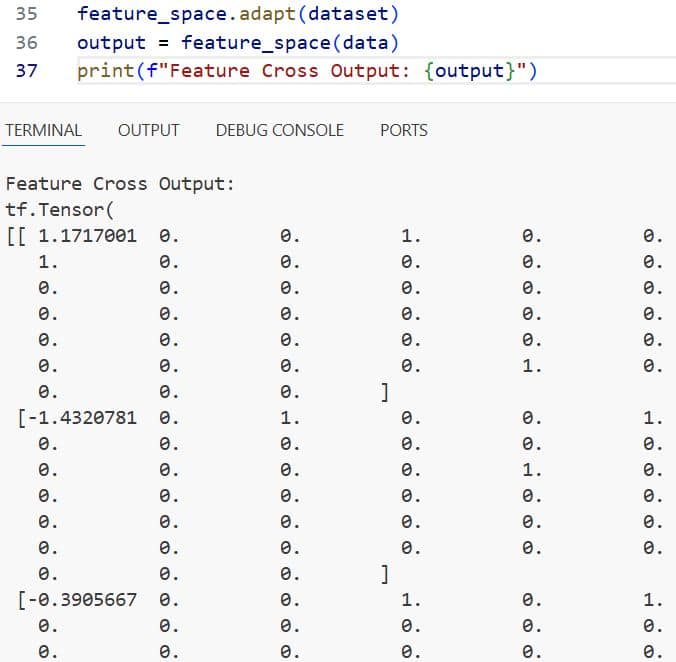
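To make the skew point concrete, here is a minimal sketch using a standalone tf.keras.layers.Normalization layer, the kind of layer that backs a float_normalized feature. The statistics are computed once by adapt() on the training values (the square_feet column from above) and then reused unchanged for any value that arrives later at serving time.

```python
import numpy as np
import tensorflow as tf

# Training-time values (the square_feet column from the sample data)
train_values = np.array([2500.0, 1200.0, 1800.0, 900.0], dtype="float32")

# axis=None computes a single global mean and variance during adapt()
normalizer = tf.keras.layers.Normalization(axis=None)
normalizer.adapt(train_values)

# Serving-time inputs: the layer reuses the statistics learned above
serving_values = np.array([1600.0, 2500.0], dtype="float32")
normalized = normalizer(serving_values).numpy()

# The same formula by hand with the training statistics: (x - mean) / std
mean = train_values.mean()
std = train_values.std()
expected = (serving_values - mean) / std

print(normalized, expected)
```

Because the mean and variance live in the layer's state, nothing at serving time needs to recompute or re-supply them.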

Implement Feature Crosses with Keras FeatureSpace

In my experience, some of the most predictive power in US-based datasets comes from the interaction between two different categorical features.

Feature crossing allows the model to learn specific patterns, such as how a specific “Car Model” performs differently in “Colder Climates” versus “Desert Climates.”

```python
import tensorflow as tf
from tensorflow.keras import utils

# Data representing vehicle performance in different US regions
data = {
    "region": ["Northeast", "Southwest", "Northeast", "Southwest"],
    "vehicle_type": ["EV", "Truck", "Truck", "EV"],
    "efficiency_score": [0.9, 0.4, 0.6, 0.8],
}
labels = [1, 0, 0, 1]

dataset = tf.data.Dataset.from_tensor_slices((data, labels)).batch(2)

feature_space = utils.FeatureSpace(
    features={
        "region": "string_categorical",
        "vehicle_type": "string_categorical",
        "efficiency_score": "float_normalized",
    },
    # Hash every (region, vehicle_type) pair into one of 32 buckets
    crosses=[utils.FeatureSpace.cross(feature_names=("region", "vehicle_type"), crossing_dim=32)],
    output_mode="concat",
)

feature_space.adapt(dataset.map(lambda x, y: x))

# Encode all four rows at once (labels are not part of the input dict)
raw_features = {name: tf.constant(values) for name, values in data.items()}
output = feature_space(raw_features)
print(f"Feature Cross Output: {output}")
```

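The cross function hashes each combination of values (here, every region and vehicle_type pair) into one of crossing_dim buckets, so the output width stays fixed even when the features have many categories and the number of possible combinations is large.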
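As a rough illustration of the hashing step, the sketch below calls tf.keras.layers.HashedCrossing directly on a few region/vehicle pairs. FeatureSpace.cross is built on this kind of hashed crossing, but the exact bucket numbers you see are an implementation detail; what matters is that every bin falls in [0, 32) and identical pairs always land in the same bin.

```python
import tensorflow as tf

region = tf.constant(["Northeast", "Southwest", "Northeast", "Southwest"])
vehicle_type = tf.constant(["EV", "Truck", "EV", "Truck"])

# Hash every (region, vehicle_type) combination into one of 32 bins
crosser = tf.keras.layers.HashedCrossing(num_bins=32)
bins = crosser((region, vehicle_type)).numpy()

# Rows 0 and 2 share a pair, as do rows 1 and 3, so their bins match
print(bins)
```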

I have used this extensively in recommendation engines to capture the relationship between user demographics and specific product categories.

Customize Transformation Rules in Keras FeatureSpace

Sometimes the default “float_normalized” or “string_categorical” settings aren’t enough for specific business logic or data distributions.

I often encounter scenarios where I need to apply specific bucketization to numerical values, like grouping “Annual Income” into specific tax brackets.

```python
import tensorflow as tf
from tensorflow.keras import utils

# US salary data for bucketization
data = {
    "income": [45000.0, 95000.0, 150000.0, 250000.0],
    "years_exp": [2.0, 8.0, 12.0, 20.0],
}
labels = [0, 1, 1, 1]

dataset = tf.data.Dataset.from_tensor_slices((data, labels)).batch(2)

feature_space = utils.FeatureSpace(
    features={
        # Bin boundaries for income are learned from the data during adapt()
        "income": utils.FeatureSpace.float_discretized(num_bins=4),
        "years_exp": utils.FeatureSpace.float_normalized(),
    },
    output_mode="concat",
)

feature_space.adapt(dataset.map(lambda x, y: x))

raw_features = {name: tf.constant(values) for name, values in data.items()}
processed_data = feature_space(raw_features)
print(f"Discretized Income Data: {processed_data}")
```

By using float_discretized, the model can learn non-linear relationships within specific ranges of a continuous variable without needing a complex architecture. With num_bins=4, the bin boundaries are learned from the data during adapt() rather than set by hand.

I find that this specific transformation helps significantly when the relationship between the feature and the target isn’t strictly linear.
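If you need fixed, business-defined ranges (as in the tax-bracket example) instead of boundaries learned from the data, one option is a standalone tf.keras.layers.Discretization layer with explicit bin_boundaries. This is a sketch, and the dollar thresholds below are hypothetical placeholders, not real US tax brackets.

```python
import tensorflow as tf

# Hypothetical bracket thresholds in US dollars (illustrative only)
bracket_boundaries = [50000.0, 100000.0, 200000.0]

# With explicit boundaries the layer needs no adapt() call.
# Bins: (-inf, 50k), [50k, 100k), [100k, 200k), [200k, +inf)
bracketizer = tf.keras.layers.Discretization(bin_boundaries=bracket_boundaries)

incomes = tf.constant([45000.0, 95000.0, 150000.0, 250000.0])
bracket_ids = bracketizer(incomes).numpy()
print(bracket_ids)  # → [0 1 2 3]
```

Each income is replaced by the index of the bracket it falls into, which the model can then treat as a categorical signal.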

Handle Missing Values with Keras FeatureSpace Preprocessing

Data quality is rarely perfect, and handling “NaN” or empty strings is a step I never skip in a professional production environment.

I rely on FeatureSpace so that categories it never saw during adapt() are mapped to an “Out of Vocabulary” (OOV) bucket instead of crashing the pipeline.

```python
import numpy as np
import tensorflow as tf
from tensorflow.keras import utils

# Dataset with missing values in US job titles
data = {
    "job_title": ["Engineer", "Analyst", None, "Manager"],
    "active_years": [5, 2, 10, np.nan],
}

# Fill missing values before building the tf.data pipeline.
# "Unknown" becomes its own learned category; it is kept distinct from
# "[UNK]", the token StringLookup reserves for out-of-vocabulary values.
data["job_title"] = [x if x is not None else "Unknown" for x in data["job_title"]]
data["active_years"] = [0.0 if np.isnan(x) else float(x) for x in data["active_years"]]

dataset = tf.data.Dataset.from_tensor_slices(data).batch(2)

feature_space = utils.FeatureSpace(
    features={
        "job_title": "string_categorical",
        "active_years": "float_normalized",
    },
    output_mode="concat",
)

feature_space.adapt(dataset)
print("Handled missing values successfully.")
```

While Keras layers generally expect clean inputs, incorporating an “Unknown” token in your string_categorical logic is a lifesaver for real-world data.

I always recommend preprocessing your raw dictionary to handle nulls before passing them to the FeatureSpace to maintain total control over the defaults.

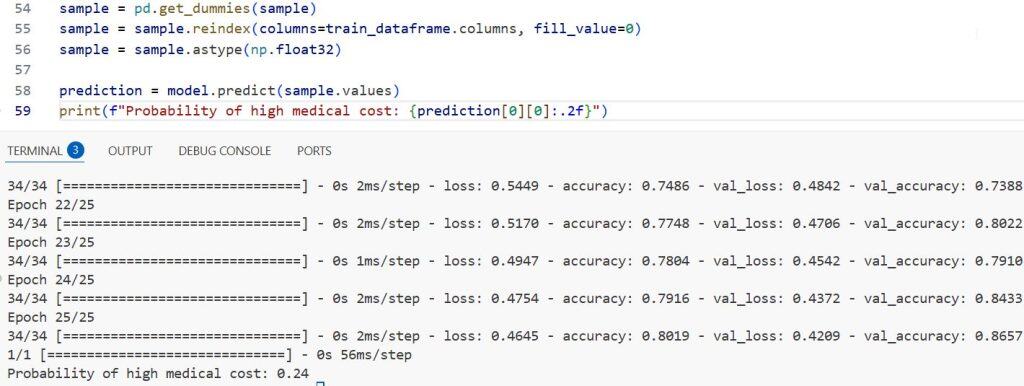
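The OOV behavior comes from the lookup layer behind string_categorical. A minimal sketch with a standalone tf.keras.layers.StringLookup (default settings: one OOV index, OOV token "[UNK]") shows that a value never seen during adapt() maps to index 0 rather than raising an error. The job titles and the unseen value "Astronaut" are just examples.

```python
import tensorflow as tf

# Learn a small vocabulary of job titles
lookup = tf.keras.layers.StringLookup()
lookup.adapt(tf.constant(["Engineer", "Analyst", "Manager"]))

# "Astronaut" was never seen during adapt(), so it falls into the OOV bucket
ids = lookup(tf.constant(["Engineer", "Astronaut"])).numpy()
vocab = lookup.get_vocabulary()

print(vocab)  # index 0 is the OOV token
print(ids)
```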

Build an End-to-End Model with Keras FeatureSpace

The ultimate goal of using this utility is to plug the preprocessed features directly into a functional or sequential Keras model.

I prefer this workflow because it allows me to export a single model file that handles both the raw data string inputs and the neural network weights.

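```python
import tensorflow as tf
from tensorflow.keras import layers, utils

# Mock dataset for US credit scoring
data = {
    "credit_score": [720.0, 640.0, 800.0, 580.0],
    "loan_amount": [20000.0, 15000.0, 40000.0, 5000.0],
    "state": ["NY", "CA", "TX", "FL"],
}
labels = [1, 0, 1, 0]

# Yield (features, label) pairs so fit() receives the targets separately
dataset = tf.data.Dataset.from_tensor_slices((data, labels)).batch(2)

feature_space = utils.FeatureSpace(
    features={
        "credit_score": "float_normalized",
        "loan_amount": "float_normalized",
        "state": "string_categorical",
    },
    output_mode="concat",
)

# adapt() only needs the feature dictionaries, not the labels
feature_space.adapt(dataset.map(lambda x, y: x))

# Symbolic raw inputs (one tf.keras.Input per feature) and their encoded form
dict_inputs = feature_space.get_inputs()
encoded_features = feature_space.get_encoded_features()

x = layers.Dense(32, activation="relu")(encoded_features)
x = layers.Dense(16, activation="relu")(x)
predictions = layers.Dense(1, activation="sigmoid")(x)

# Train on already-encoded features...
training_model = tf.keras.Model(inputs=encoded_features, outputs=predictions)
training_model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])

preprocessed_ds = dataset.map(lambda x, y: (feature_space(x), y))
training_model.fit(preprocessed_ds, epochs=2, verbose=0)

# ...then serve a model that accepts the raw feature dictionary directly
inference_model = tf.keras.Model(inputs=dict_inputs, outputs=predictions)
print("Model trained; inference_model accepts raw dictionary inputs.")
```

Using feature_space.get_inputs() is the “secret sauce” here, as it automatically generates the correct tf.keras.Input objects based on your feature definitions, while get_encoded_features() returns the matching encoded tensor that the Dense layers consume.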

In my projects, this has reduced the lines of code required for data ingestion by nearly 40%, making the entire codebase much easier to audit and maintain.

I hope you found this tutorial on advanced Keras FeatureSpace use cases helpful!

By centralizing your preprocessing, you can build more robust models that are easier to deploy and scale.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.