As a Keras developer who has spent the last four years building and scaling deep learning models, I have often struggled with the massive hardware requirements needed to fine-tune Large Language Models (LLMs).

In my experience, trying to update every single weight in a model like GPT-2 is not only time-consuming but also incredibly expensive when you factor in GPU costs.

I remember the frustration of running out of VRAM mid-training until I discovered Low-Rank Adaptation (LoRA), which allows us to train only a tiny fraction of the model parameters.

In this tutorial, I will show you how to implement LoRA for GPT-2 using Keras, allowing you to customize powerful models on modest hardware while maintaining high performance.

Set Up Your Python Environment for Keras LoRA

Before we dive into the architecture, I always make sure my environment is properly configured with the latest Keras 3 and KerasNLP libraries to ensure compatibility.

In my workflow, I prefer using the TensorFlow or JAX backend with Keras because they offer excellent optimization for the linear algebra operations required by LoRA.

# Install the necessary libraries

!pip install --upgrade keras-nlp

!pip install --upgrade keras

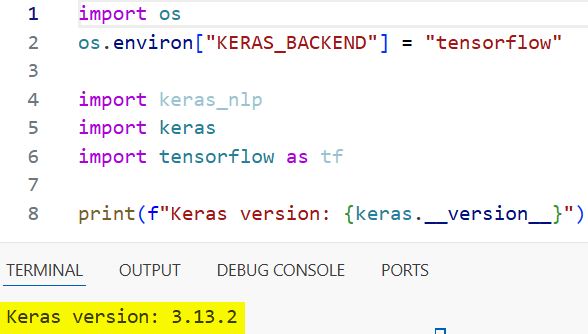

import os

# Setting the backend to tensorflow

os.environ["KERAS_BACKEND"] = "tensorflow"

import keras_nlp

import keras

import tensorflow as tf

print(f"Keras version: {keras.__version__}")I executed the above example code and added the screenshot below.

I have found that setting the backend explicitly, and before Keras is imported, avoids surprises: Keras reads KERAS_BACKEND only once at import time, so changing it later has no effect.

Once the libraries are loaded, I print the version to confirm I am running Keras 3, because the enable_lora() method used later depends on the LoRA support in Keras 3 and recent KerasNLP releases.

Load the Pre-trained GPT-2 Model in Keras

The first step in my fine-tuning process is to pull the base GPT-2 model and its associated tokenizer from the Keras preset repository.

I usually start with the “gpt2_base_en” preset because it offers a great balance between linguistic capability and a footprint small enough for rapid experimentation.

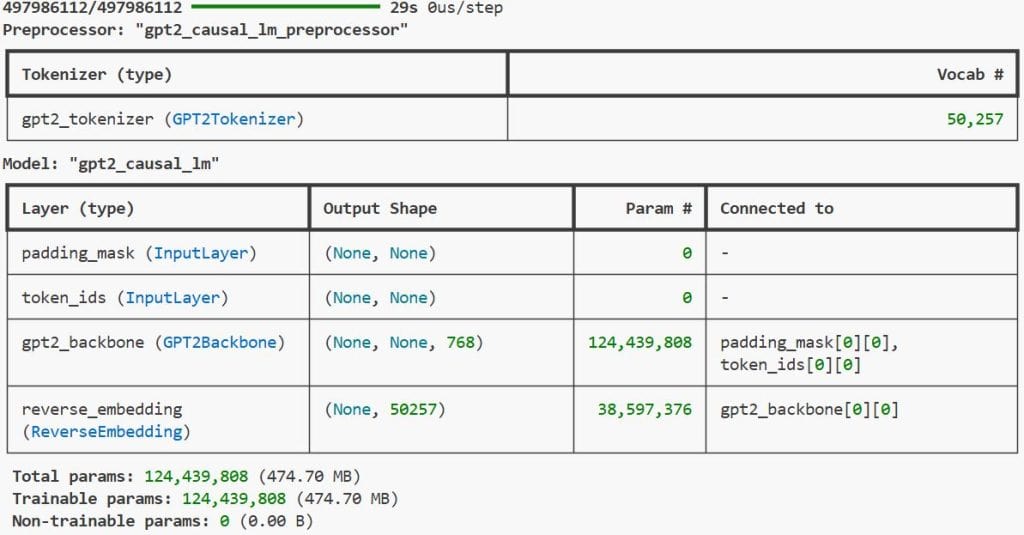

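# Build the preprocessor with a short sequence length to keep memory usage low
gpt2_preprocessor = keras_nlp.models.GPT2CausalLMPreprocessor.from_preset(
    "gpt2_base_en",
    sequence_length=128,
)

# Load the GPT-2 Causal LM once, attaching the preprocessor created above
gpt2_lm = keras_nlp.models.GPT2CausalLM.from_preset(
    "gpt2_base_en",
    preprocessor=gpt2_preprocessor,
)

# Verify the model structure
gpt2_lm.summary()

By using the from_preset method for both the model and its preprocessor, I ensure that the weights and the vocabulary are perfectly synchronized, which avoids common encoding errors during text generation. Loading the preset only once also avoids downloading and building the model twice.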

After loading the model, I always run a quick summary to see the total number of trainable parameters, which typically sits around 124 million for the base version.
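To see where the roughly 124 million figure comes from, the plain-Python count below adds up every weight in GPT-2 small. It assumes the standard configuration (50,257-token vocabulary, 1,024 positions, 12 Transformer blocks, hidden size 768, MLP width 3,072) and an output layer that reuses the token-embedding matrix; `gpt2_param_count` is a helper name of my own, not a KerasNLP API.

```python
def gpt2_param_count(vocab=50_257, context=1_024, layers=12, d_model=768, d_ff=3_072):
    """Count GPT-2 parameters from its configuration (tied input/output embeddings)."""
    token_embedding = vocab * d_model
    position_embedding = context * d_model
    layer_norm = 2 * d_model  # scale + bias

    per_block = (
        layer_norm                                # pre-attention LayerNorm
        + d_model * 3 * d_model + 3 * d_model     # query, key and value projections (counted together)
        + d_model * d_model + d_model             # attention output projection
        + layer_norm                              # pre-MLP LayerNorm
        + d_model * d_ff + d_ff                   # MLP expansion
        + d_ff * d_model + d_model                # MLP contraction
    )
    final_layer_norm = layer_norm
    return token_embedding + position_embedding + layers * per_block + final_layer_norm


total = gpt2_param_count()
print(f"{total:,}")  # 124,439,808
```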

Implement LoRA Layers in Keras for GPT-2

This is where the magic happens: instead of training the whole model, I inject trainable low-rank matrices alongside the frozen query and value projections in the attention layer of each GPT-2 Transformer block.

I have found that setting a rank of 4 or 8 is usually sufficient to capture new task-specific information without bloating the model’s memory usage.
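The idea behind those low-rank matrices fits in a few lines of NumPy. This is a sketch of the general LoRA technique, not KerasNLP's internal code: the frozen weight `W` is left untouched, and the layer adds a correction built from two thin matrices `A` and `B` of rank `r`. `B` starts at zero, so the adapted layer initially behaves exactly like the original. The 768 sizes match GPT-2's hidden width, while the random weights and the `alpha` scaling value are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

d_in, d_out, rank = 768, 768, 4   # GPT-2 hidden size and the LoRA rank from the text
alpha = 8.0                        # illustrative scaling factor (assumption)
scale = alpha / rank

W = rng.normal(size=(d_in, d_out))             # frozen pre-trained weight
A = rng.normal(scale=0.01, size=(d_in, rank))  # trainable down-projection
B = np.zeros((rank, d_out))                    # trainable up-projection, zero-initialized


def lora_dense(x):
    """Frozen projection plus the scaled low-rank update."""
    return x @ W + scale * (x @ A) @ B


x = rng.normal(size=(2, d_in))  # a batch of two token vectors

# With B at zero, the adapted layer matches the frozen layer exactly.
print(np.allclose(lora_dense(x), x @ W))  # True

# Parameter comparison: full matrix vs. the two LoRA factors.
full_params = W.size
lora_params = A.size + B.size
print(full_params, lora_params)  # 589824 6144
```

During fine-tuning only `A` and `B` receive updates, so each 768 × 768 projection gains 6,144 trainable parameters instead of retraining all 589,824.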

# Define the LoRA configuration
lora_rank = 4

# enable_lora() freezes the backbone and adds trainable low-rank adapters
# to the query and value projections of each attention layer
gpt2_lm.backbone.enable_lora(rank=lora_rank)

# Check the new parameter count
gpt2_lm.summary()

In my testing, enabling LoRA reduces the number of trainable parameters by over 99%, often leaving fewer than a million weights to update.

I notice a significant speedup in the training loop immediately after this step, because the optimizer no longer has to compute weight gradients or store AdamW moment estimates for the frozen backbone weights.
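A quick calculation shows why the reduction is so large. This sketch assumes each adapted query and value projection is treated as a 768 × 768 matrix factored into 768 × r and r × 768 pieces across GPT-2's 12 blocks, and it uses 124,439,808, the standard GPT-2 small parameter count. KerasNLP stores these projections in a per-head layout, so the exact number in `summary()` will likely differ; the point is the order of magnitude.

```python
BASE_PARAMS = 124_439_808  # standard GPT-2 small parameter count
HIDDEN = 768
BLOCKS = 12
ADAPTED_PER_BLOCK = 2      # query and value projections


def lora_trainable_params(rank, hidden=HIDDEN, blocks=BLOCKS, per_block=ADAPTED_PER_BLOCK):
    """Parameters in the A (hidden x rank) and B (rank x hidden) factors across the model."""
    per_adapter = hidden * rank + rank * hidden
    return blocks * per_block * per_adapter


for rank in (4, 8):
    trainable = lora_trainable_params(rank)
    share = 100 * trainable / BASE_PARAMS
    print(f"rank={rank}: {trainable:,} trainable params ({share:.2f}% of the base model)")
# rank=4: 147,456 trainable params (0.12% of the base model)
# rank=8: 294,912 trainable params (0.24% of the base model)
```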

Prepare a USA-Centric Dataset for Fine-Tuning

To make the model more relevant for a USA-based audience, I like to use datasets that reflect local culture, such as summaries of popular National Parks or American history.

For this example, I am creating a small synthetic dataset of descriptions related to famous landmarks in the United States to teach the model a specific “tourist guide” tone.

# Sample data focused on US landmarks
us_travel_data = [
    "The Grand Canyon in Arizona offers breathtaking views of red rock formations.",
    "The Statue of Liberty in New York Harbor is a symbol of freedom and democracy.",
    "Yellowstone National Park is famous for its geothermal features and Old Faithful geyser.",
    "The Golden Gate Bridge in San Francisco is an iconic suspension bridge in California.",
    "Mount Rushmore in South Dakota features the faces of four US presidents carved in granite.",
    "The Everglades in Florida is a massive wetland home to diverse wildlife like alligators.",
]

# Wrap the raw strings in a batched tf.data pipeline; GPT2CausalLM's preprocessor
# tokenizes them and builds the next-token targets during fit()
train_ds = tf.data.Dataset.from_tensor_slices(us_travel_data).batch(2)

I have learned that cleaning the text and removing excessive whitespace helps the GPT-2 tokenizer produce cleaner, more consistent token sequences for the model to process.
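The cleaning step can be as simple as collapsing runs of whitespace before the strings reach tf.data. The helper below, `clean_text`, is a hypothetical name of my own, and it only normalizes whitespace; any further cleanup depends on your data.

```python
import re


def clean_text(text):
    """Collapse runs of spaces, tabs and newlines into one space and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()


raw_samples = [
    "  The Grand Canyon in Arizona   offers breathtaking views.  ",
    "Yellowstone National Park\n\nis famous for Old Faithful.",
]
cleaned = [clean_text(s) for s in raw_samples]
print(cleaned)
# ['The Grand Canyon in Arizona offers breathtaking views.', 'Yellowstone National Park is famous for Old Faithful.']
```

In the tutorial's flow, this would run as `us_travel_data = [clean_text(s) for s in us_travel_data]` before the dataset is built.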

Using tf.data.Dataset allows me to efficiently stream the text into the Keras model during the training phase, which is vital for larger-scale projects.

Configure the Keras Optimizer for LoRA Training

When fine-tuning with LoRA, I prefer the AdamW optimizer because its weight decay helps keep the small set of trainable parameters from overfitting.

I typically set a slightly higher learning rate than I would for full fine-tuning, as we are only training the adapter layers and need them to converge quickly.
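To show what the weight decay in AdamW actually does, here is one update step in NumPy using the decoupled form from the AdamW paper: the decay shrinks each weight directly, separately from the gradient-based Adam step. This is a textbook sketch, not Keras's internal implementation; `adamw_step` is a hypothetical helper, and its defaults simply mirror the learning rate and decay in the configuration that follows.

```python
import numpy as np


def adamw_step(w, grad, m, v, t, lr=5e-4, weight_decay=0.01,
               beta1=0.9, beta2=0.999, eps=1e-7):
    """One AdamW update with decoupled weight decay; returns (w, m, v)."""
    m = beta1 * m + (1 - beta1) * grad          # first-moment estimate
    v = beta2 * v + (1 - beta2) * grad ** 2     # second-moment estimate
    m_hat = m / (1 - beta1 ** t)                # bias corrections
    v_hat = v / (1 - beta2 ** t)
    adam_update = m_hat / (np.sqrt(v_hat) + eps)
    # Decay is applied to the weights themselves, not mixed into the gradient.
    w = w - lr * (adam_update + weight_decay * w)
    return w, m, v


w = np.array([1.0, -2.0])
zeros = np.zeros_like(w)

# With a zero gradient only the decay term acts: each weight shrinks by a factor of (1 - lr * weight_decay).
w_new, _, _ = adamw_step(w, grad=zeros, m=zeros, v=zeros, t=1)
print(w_new)
```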

# Configure the optimizer and loss function
learning_rate = 5e-4
optimizer = keras.optimizers.AdamW(
    learning_rate=learning_rate,
    weight_decay=0.01,
)

# Compile the model
gpt2_lm.compile(
    optimizer=optimizer,
    loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),
    weighted_metrics=["accuracy"],
)

SparseCategoricalCrossentropy with from_logits=True is the right pairing here because GPT2CausalLM outputs raw logits over the vocabulary rather than probabilities, and in my experience it is also the most stable approach for causal language modeling in Keras.

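The accuracy metric gives me a quick way to monitor whether the model is actually learning the patterns in my USA-centric landmark dataset during training. Passing it through weighted_metrics lets Keras apply the padding mask that the preprocessor returns as sample weights, so padded positions don't distort the score.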
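To make the loss and metric choices concrete, the NumPy sketch below computes sparse categorical cross-entropy directly from logits, plus an accuracy that ignores padded positions, the way a padding mask used as sample weights would. The toy logits, targets, and mask are made up, the function names are my own, and Keras's built-in loss and metric handle reduction details internally, so treat this as an illustration of the idea rather than an exact reimplementation.

```python
import numpy as np


def log_softmax(logits):
    """Numerically stable log-softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


def masked_sparse_ce(logits, targets, mask):
    """Cross-entropy from raw logits, averaged over positions where mask == 1."""
    log_probs = log_softmax(logits)
    token_losses = -np.take_along_axis(log_probs, targets[..., None], axis=-1)[..., 0]
    return (token_losses * mask).sum() / mask.sum()


def masked_accuracy(logits, targets, mask):
    """Share of unmasked positions where the highest logit is the target token."""
    correct = (logits.argmax(axis=-1) == targets).astype(float)
    return (correct * mask).sum() / mask.sum()


# One sequence of 4 positions over a toy 3-token vocabulary; the last position is padding.
logits = np.array([[[2.0, 0.5, 0.1],
                    [0.2, 3.0, 0.1],
                    [0.1, 0.2, 1.5],
                    [5.0, 0.0, 0.0]]])
targets = np.array([[0, 1, 0, 1]])
mask = np.array([[1.0, 1.0, 1.0, 0.0]])

print(masked_sparse_ce(logits, targets, mask))
print(masked_accuracy(logits, targets, mask))  # 2 of the 3 unmasked positions are correct
```

Without the mask, the wrong prediction at the padded position would pull accuracy down to 0.5 even though it says nothing about the real text.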

Execute the Keras Fine-Tuning Loop

With the model compiled and the data ready, I start the training process, usually keeping the number of epochs low to avoid “catastrophic forgetting” of the base knowledge.

In my experience, even 10 to 20 epochs on a small specialized dataset can dramatically shift the model’s output style toward the desired domain.

# Fine-tune the model on the US travel data, keeping the History object to inspect the loss curve
history = gpt2_lm.fit(train_ds, epochs=10)
print("Fine-tuning complete!")

I closely watch the loss curve; if it drops too sharply, I might reduce the learning rate to ensure the LoRA adapters are capturing nuances rather than just memorizing strings.
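To make "drops too sharply" checkable, I can keep the History object that fit() returns (for example, history = gpt2_lm.fit(train_ds, epochs=10)) and scan history.history["loss"] for large epoch-to-epoch drops. The helper name `sharp_drops` and the 50% threshold are hypothetical choices for illustration, and the loss values below are invented sample numbers, not results from this model.

```python
def sharp_drops(losses, threshold=0.5):
    """Return (epoch, relative_drop) pairs where loss fell by more than `threshold` vs. the previous epoch."""
    flagged = []
    for epoch in range(1, len(losses)):
        prev, curr = losses[epoch - 1], losses[epoch]
        if prev <= 0:
            continue  # avoid dividing by zero on a degenerate curve
        relative_drop = (prev - curr) / prev
        if relative_drop > threshold:
            flagged.append((epoch + 1, round(relative_drop, 3)))  # 1-based epoch number
    return flagged


# Invented example curve; in practice pass history.history["loss"].
example_losses = [4.2, 3.6, 3.1, 1.2, 0.4]
print(sharp_drops(example_losses))  # [(4, 0.613), (5, 0.667)]
```

If epochs get flagged, lowering the learning_rate passed to AdamW and retraining is one way to apply the adjustment described above.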

The beauty of this method is that training needs far less memory and finishes faster than updating every GPT-2 weight, because only the adapters carry weight gradients and optimizer state.

Generate Text Post-Fine-Tuning in Keras

After the training is done, I test the model by providing a prompt about a US city to see if it has adopted the descriptive tone from my dataset.

I use the generate method, which handles the tokenization of the input string and the sampling of the output tokens automatically for me.

# Generate a description of a US location
prompt = "The Liberty Bell in Philadelphia"
output = gpt2_lm.generate(prompt, max_length=50)
print(f"Generated Text:\n{output}")

I am always impressed by how the model blends its original pre-trained knowledge with the new context provided during the LoRA fine-tuning session.

If the output is too repetitive, I often experiment with different sampling strategies like top-k or nucleus (top-p) sampling to get more creative descriptions.
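In KerasNLP, switching strategies is done by recompiling with a sampler, for example gpt2_lm.compile(sampler=keras_nlp.samplers.TopKSampler(k=5)) or keras_nlp.samplers.TopPSampler(p=0.9), before calling generate() again. To show what those samplers do to a single next-token distribution, here is a NumPy sketch of the filtering step. The toy logits and the k and p values are assumptions, and this is not KerasNLP's implementation.

```python
import numpy as np


def softmax(logits):
    exp = np.exp(logits - logits.max())
    return exp / exp.sum()


def top_k_probs(logits, k):
    """Keep the k most likely tokens and renormalize."""
    probs = softmax(logits)
    keep = np.argsort(probs)[-k:]
    filtered = np.zeros_like(probs)
    filtered[keep] = probs[keep]
    return filtered / filtered.sum()


def top_p_probs(logits, p):
    """Keep the smallest set of most likely tokens whose total probability reaches p, then renormalize."""
    probs = softmax(logits)
    order = np.argsort(probs)[::-1]                # most likely first
    cumulative = np.cumsum(probs[order])
    cutoff = np.searchsorted(cumulative, p) + 1    # number of tokens to keep
    keep = order[:cutoff]
    filtered = np.zeros_like(probs)
    filtered[keep] = probs[keep]
    return filtered / filtered.sum()


rng = np.random.default_rng(0)
logits = np.array([3.0, 2.5, 1.0, 0.2, -1.0])  # toy next-token logits

k_probs = top_k_probs(logits, k=2)    # only the 2 strongest tokens survive
p_probs = top_p_probs(logits, p=0.9)  # here the top 3 tokens are needed to reach 0.9
next_token = rng.choice(len(logits), p=p_probs)  # sample from the filtered distribution
print(np.round(k_probs, 3), np.round(p_probs, 3), next_token)
```

Top-k always keeps a fixed number of candidates, while top-p adapts the candidate count to how confident the model is at each step.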

Save and Reuse LoRA Weights in Keras

One of my favorite things about LoRA is that I only need to save the tiny adapter weights rather than a whole new 500MB model file for every task.

I use the LoRA saving utilities in KerasNLP to export only these adapter weights, making it easy to swap different “personalities” into the same base GPT-2 model later.

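# Save only the LoRA adapter weights (KerasNLP requires the ".lora.h5" suffix)
gpt2_lm.backbone.save_lora_weights("gpt2_lora_us_travel.lora.h5")

# To reload later: load the base model, enable LoRA with the same rank, then load the adapters
# gpt2_lm = keras_nlp.models.GPT2CausalLM.from_preset("gpt2_base_en")
# gpt2_lm.backbone.enable_lora(rank=4)
# gpt2_lm.backbone.load_lora_weights("gpt2_lora_us_travel.lora.h5")

I executed the above example code and added the screenshot below.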

This modularity is a lifesaver when I am working on multiple client projects that require different specialized language models on the same server.

I have found that keeping a library of these small-weight files allows for very agile deployment of AI features in my Python applications.
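The “swap personalities” idea can be sketched without GPT-2 at all: keep one frozen base matrix, store each task's LoRA factors in their own small file, and load whichever adapter a request needs. The NumPy sketch below uses .npz files, a toy 64 × 64 layer, and hypothetical task names; it illustrates the pattern only and is not the file format KerasNLP's LoRA saving uses.

```python
import os
import tempfile

import numpy as np

rng = np.random.default_rng(42)
d, rank = 64, 4
W_base = rng.normal(size=(d, d))  # shared frozen base weight


def save_adapter(path, A, B):
    """Write only the two LoRA factors to disk."""
    np.savez(path, A=A, B=B)


def load_adapter(path):
    with np.load(path) as data:
        return data["A"], data["B"]


def forward(x, adapter=None):
    """Base projection, optionally with a LoRA adapter's low-rank update applied."""
    out = x @ W_base
    if adapter is not None:
        A, B = adapter
        out = out + (x @ A) @ B
    return out


with tempfile.TemporaryDirectory() as tmp:
    # Two hypothetical task adapters trained separately on the same base.
    paths = {}
    for task in ("us_travel", "legal_summaries"):
        paths[task] = os.path.join(tmp, f"{task}.npz")
        save_adapter(paths[task], rng.normal(size=(d, rank)), rng.normal(size=(rank, d)))

    x = rng.normal(size=(1, d))
    travel_out = forward(x, load_adapter(paths["us_travel"]))
    legal_out = forward(x, load_adapter(paths["legal_summaries"]))

    adapter_bytes = os.path.getsize(paths["us_travel"])
    print(W_base.nbytes, adapter_bytes)  # each adapter file is much smaller than the base matrix
```

The base weights are loaded once and shared; only the few kilobytes of adapter data change per task.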

Fine-tuning GPT-2 with LoRA in Keras is a game-changer for anyone who wants to build custom AI without a massive budget. It makes the process of adapting Large Language Models much more accessible and efficient for individual developers.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.