Working with structured data in deep learning used to feel like a constant battle with preprocessing pipelines. I remember spending hours manually mapping integers and scaling floats before even touching a model.

That changed when I started using the Keras FeatureSpace utility. It simplifies the entire process of mapping raw tabular data into a format that a neural network can actually understand.

In this tutorial, I will walk you through how I handle structured data classification using FeatureSpace. We will use a practical dataset of US health insurance parameters to predict whether a policyholder's medical charges are high or low.

Set Up Your Keras Environment for FeatureSpace

Before we dive into the data, I always ensure my environment is updated to use the latest Keras 3 features. Having the right dependencies installed prevents those annoying compatibility errors mid-project.

```python
import os

# The backend must be chosen before keras is imported.
os.environ["KERAS_BACKEND"] = "tensorflow"

import keras
from keras import layers
import pandas as pd
import tensorflow as tf

print(f"Current Keras version: {keras.__version__}")
```

I prefer setting the backend explicitly to TensorFlow because the tf.data pipeline and the preprocessing layers used below integrate most smoothly with it. This setup is the foundation for every structured data project I build in Python.

Load US Health Insurance Data for Keras Classification

I often use the US Health Insurance dataset because it contains a good mix of categorical and numerical features. It covers real-world attributes such as age, BMI, smoking status, and US region.

```python
file_url = "https://gist.githubusercontent.com/m0n0p0l1/8534e3868038965f57388703a556396b/raw/insurance.csv"
dataframe = pd.read_csv(file_url)

# Convert charges to a binary classification: High Cost vs Low Cost
dataframe["target"] = (dataframe["charges"] > 15000).astype(int)
dataframe = dataframe.drop(columns=["charges"])

print(dataframe.head())
```

Loading the data into a Pandas DataFrame allows me to quickly inspect the distribution of features. I converted the target variable into a binary format to make this a clear classification task for our model.
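To make the thresholding step concrete, here is a minimal sketch in plain Python. The charge values are made up for illustration and are not rows from the insurance CSV; only the 15000 cutoff comes from the tutorial code. Checking the class balance after binarizing is worthwhile, because a heavily skewed target makes plain accuracy misleading.

```python
from collections import Counter

# Hypothetical charges for illustration only (not taken from the dataset).
charges = [1725.55, 4449.46, 16884.92, 21984.47, 3866.86, 39611.76]
THRESHOLD = 15000  # same cutoff as in the tutorial code

# 1 = high cost, 0 = low cost
labels = [int(charge > THRESHOLD) for charge in charges]

# Count how many rows fall into each class.
class_counts = Counter(labels)
positive_rate = class_counts[1] / len(labels)

print(f"labels: {labels}")
print(f"share of high-cost rows: {positive_rate:.2f}")
```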

Create a Keras Dataset for FeatureSpace Processing

Once the data is in memory, I convert the DataFrame into a tf.data.Dataset object. This is a crucial step because FeatureSpace is adapted on a tf.data.Dataset, and tf.data can feed batches to the model efficiently during training.

```python
def dataframe_to_dataset(dataframe):
    dataframe = dataframe.copy()
    labels = dataframe.pop("target")
    ds = tf.data.Dataset.from_tensor_slices((dict(dataframe), labels))
    ds = ds.shuffle(buffer_size=len(dataframe))
    return ds

val_dataframe = dataframe.sample(frac=0.2, random_state=1337)
train_dataframe = dataframe.drop(val_dataframe.index)

train_ds = dataframe_to_dataset(train_dataframe).batch(32)
val_ds = dataframe_to_dataset(val_dataframe).batch(32)
```

I always split my data into training and validation sets early to avoid any data leakage. Batching the dataset ensures that our Keras model trains efficiently without overloading the system memory.
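If the tf.data step feels opaque, the following plain-Python sketch mimics what slicing a dict of columns and then batching does conceptually. The helper names `slice_rows` and `batch` are hypothetical and the three rows are invented; the real tf.data.Dataset does this lazily and with tensors rather than Python lists.

```python
from itertools import islice

def slice_rows(columns, labels):
    """Yield one (features_dict, label) pair per row, like slicing a dict of columns."""
    names = list(columns)
    for i, label in enumerate(labels):
        yield {name: columns[name][i] for name in names}, label

def batch(pairs, batch_size):
    """Group consecutive (features, label) pairs into lists of at most batch_size."""
    iterator = iter(pairs)
    while chunk := list(islice(iterator, batch_size)):
        yield chunk

# Invented rows for illustration only.
columns = {
    "age": [19, 33, 52],
    "smoker": ["yes", "no", "no"],
}
labels = [1, 0, 0]

rows = list(slice_rows(columns, labels))
batches = list(batch(rows, batch_size=2))

print(rows[0])
print([len(b) for b in batches])
```

Each element is a dictionary keyed by column name plus its label, which is the same shape of data that FeatureSpace consumes.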

Configure FeatureSpace for Keras Feature Encoding

This is where the magic happens; I define the FeatureSpace object to tell Keras how to treat every column. I can specify which columns are floating-point numbers and which ones are strings that need indexing.

```python
feature_space = keras.utils.FeatureSpace(
    features={
        "age": "float_normalized",
        "bmi": "float_normalized",
        "children": "integer_categorical",
        "sex": "string_categorical",
        "smoker": "string_categorical",
        "region": "string_categorical",
    },
    output_mode="concat",
)
```

By using float_normalized, I let Keras handle the mean and variance scaling automatically during the adaptation phase. The string_categorical option is a lifesaver for handling text labels like “southwest” or “northwest” without manual encoding.
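As a rough mental model of what string_categorical does, here is a plain-Python sketch of vocabulary lookup followed by one-hot encoding. It assumes one reserved out-of-vocabulary slot at index 0 and an alphabetically sorted vocabulary; both are simplifications for illustration, and the ordering Keras uses internally may differ. The function names are hypothetical.

```python
def build_vocabulary(values):
    """Map each distinct string to an index, reserving index 0 for unseen values."""
    return {token: index for index, token in enumerate(sorted(set(values)), start=1)}

def one_hot(value, vocabulary):
    """Return a one-hot list; unknown strings light up the reserved slot 0."""
    vector = [0] * (len(vocabulary) + 1)
    vector[vocabulary.get(value, 0)] = 1
    return vector

regions = ["southwest", "southeast", "northwest", "northeast", "southeast"]
vocabulary = build_vocabulary(regions)

print(vocabulary)
print(one_hot("southeast", vocabulary))
print(one_hot("midwest", vocabulary))  # not in the vocabulary
```

The reserved slot is what lets a model keep running when it meets a category at prediction time that never appeared in the training data.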

Adapt FeatureSpace to Training Data in Keras

The FeatureSpace utility needs to “look” at the data to calculate the vocabulary for categories and the mean/std for numbers. I use the adapt method on my training dataset to finalize these transformations.

```python
# We remove the label for the adaptation step
train_ds_no_labels = train_ds.map(lambda x, y: x)
feature_space.adapt(train_ds_no_labels)
```

This step is essentially the training phase for your preprocessing pipeline. Once adapted, the FeatureSpace is a locked-in transformer that ensures your validation data is scaled exactly like your training data.
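The adapt-then-apply pattern can be illustrated with a small stand-in for float_normalized. This is a sketch under stated assumptions, not Keras's implementation: the class name `SimpleNormalizer` is hypothetical, the ages are invented, and it uses the population variance with a small epsilon guard against division by zero.

```python
import math
import statistics

class SimpleNormalizer:
    """Toy stand-in for a normalization step: learn stats once, reuse them later."""

    def adapt(self, values):
        # Statistics come from the training data only.
        self.mean = statistics.fmean(values)
        self.std = math.sqrt(statistics.pvariance(values))

    def __call__(self, value, epsilon=1e-7):
        # Apply the frozen training statistics to any new value.
        return (value - self.mean) / max(self.std, epsilon)

train_ages = [20, 30, 40, 50, 60]  # invented values
val_ages = [40, 70]

normalizer = SimpleNormalizer()
normalizer.adapt(train_ages)

# Validation values are scaled with the training mean and std, never their own.
scaled_val = [normalizer(age) for age in val_ages]

print(normalizer.mean, round(normalizer.std, 4))
print([round(v, 4) for v in scaled_val])
```

Computing the statistics on the training split alone is what keeps information about the validation rows from leaking into preprocessing.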

Build the Keras Classification Model Architecture

Now that the features are defined, I build the model on the symbolic inputs and encoded outputs that FeatureSpace provides. I typically use a few dense layers with ReLU activation for structured data classification.

```python
# Symbolic Keras inputs, one per raw feature
dict_inputs = feature_space.get_inputs()
# Symbolic encoded (concatenated) features derived from those inputs
encoded_features = feature_space.get_encoded_features()

x = layers.Dense(32, activation="relu")(encoded_features)
x = layers.Dropout(0.5)(x)
x = layers.Dense(16, activation="relu")(x)
output = layers.Dense(1, activation="sigmoid")(x)

model = keras.Model(inputs=dict_inputs, outputs=output)
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])
```

Because the model's inputs are dict_inputs, the network accepts raw dictionary data and runs the preprocessing itself. This means I don’t have to repeat the scaling and encoding by hand when making future predictions.
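To get a feel for the size of this network, it helps to estimate the width of the concatenated feature vector. The sketch below is back-of-the-envelope arithmetic under loudly stated assumptions: each categorical feature is one-hot encoded with one extra out-of-vocabulary slot, and the distinct-value counts (6 for children, 2 for sex, 2 for smoker, 4 for region) are assumed rather than read from the data. Treat `model.summary()` as the source of truth for the real numbers.

```python
# Assumed number of distinct values per categorical feature (not read from the CSV).
assumed_cardinalities = {"children": 6, "sex": 2, "smoker": 2, "region": 4}
OOV_SLOTS = 1  # assumption: one reserved slot for unseen values
numeric_features = ["age", "bmi"]  # one scalar each after normalization

encoded_width = len(numeric_features) + sum(
    count + OOV_SLOTS for count in assumed_cardinalities.values()
)

def dense_params(inputs, units):
    """Weights plus biases of a fully connected layer."""
    return inputs * units + units

layer_units = [32, 16, 1]  # the Dense layers used in the tutorial model
total_params = 0
width = encoded_width
for units in layer_units:
    total_params += dense_params(width, units)
    width = units  # Dropout adds no parameters and keeps the width unchanged

print(f"encoded width: {encoded_width}, trainable parameters: {total_params}")
```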

Train the Keras Structured Data Classifier

With the model compiled, I kick off the training process using the training and validation datasets. I find that 20 to 30 epochs are usually enough for small structured datasets like this insurance one.

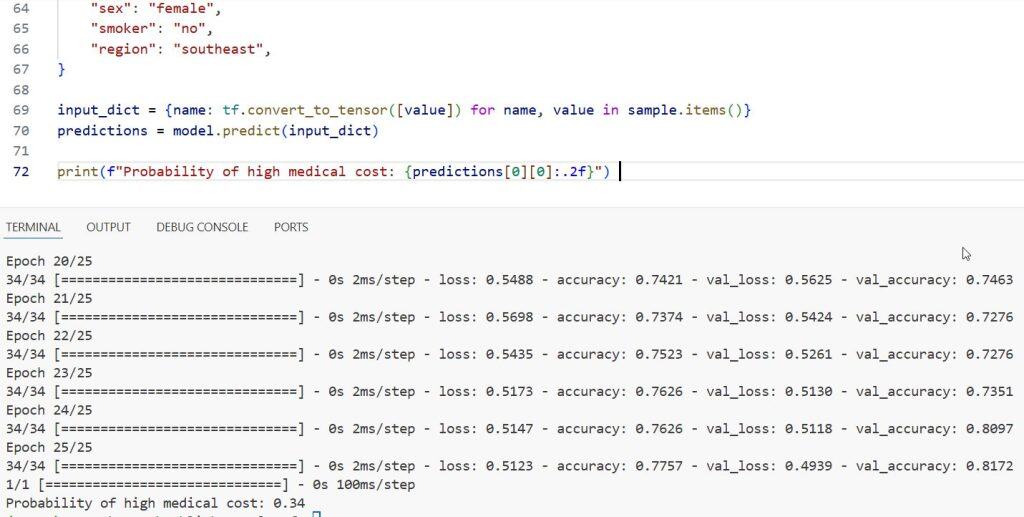

```python
model.fit(train_ds, epochs=25, validation_data=val_ds)
```

During training, I keep a close eye on the validation accuracy and loss: if they stall or worsen while the training metrics keep improving, the model is overfitting. The dropout layer I added earlier is there to reduce that risk and help the model generalize.
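One way to make the habit of watching validation metrics precise is a patience rule: stop once the validation loss has failed to improve for a few epochs in a row. Keras ships `keras.callbacks.EarlyStopping` for this; the plain-Python sketch below only illustrates the logic. The function name `best_epoch_with_patience` and the loss values are hypothetical.

```python
def best_epoch_with_patience(val_losses, patience=3):
    """Return (best_epoch, stop_epoch), both 1-indexed.

    Training stops at the first epoch where the validation loss has not
    improved for `patience` consecutive epochs, or at the last epoch.
    """
    best_loss = float("inf")
    best_epoch = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses)

# Invented validation losses: improving at first, then drifting upward.
val_losses = [0.62, 0.48, 0.41, 0.39, 0.40, 0.42, 0.45, 0.47]
best_epoch, stop_epoch = best_epoch_with_patience(val_losses, patience=3)

print(f"best epoch: {best_epoch}, stopped at epoch: {stop_epoch}")
```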

Predict New Data Points with Keras FeatureSpace

The best part about this workflow is that I can pass raw, unencoded values to the model; I only need to wrap each value of a Python dictionary in a one-element tensor. There is no need to call any separate scaling or encoding functions before running a prediction.

```python
sample = {
    "age": 45,
    "bmi": 28.5,
    "children": 2,
    "sex": "female",
    "smoker": "no",
    "region": "southeast",
}

input_dict = {name: tf.convert_to_tensor([value]) for name, value in sample.items()}
predictions = model.predict(input_dict)

print(f"Probability of high medical cost: {predictions[0][0]:.2f}")
```

You can see the output in the screenshot below.

This streamlined prediction process is what makes FeatureSpace so valuable for production environments. It encapsulates the entire logic from raw input to final classification inside a single Keras object.
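If you need a class label rather than a probability, a threshold on the sigmoid output does the job. The helper below is a hypothetical sketch; 0.5 is only a common default, and a different cutoff may suit cases where false negatives and false positives carry different costs.

```python
def to_label(probability, threshold=0.5):
    """Map a sigmoid probability to a human-readable class label."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return "high cost" if probability >= threshold else "low cost"

# Invented probabilities standing in for model.predict(...) outputs.
for probability in (0.12, 0.50, 0.87):
    print(f"{probability:.2f} -> {to_label(probability)}")
```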

In this tutorial, I showed you how to use Keras FeatureSpace to handle structured data effortlessly. You saw how to load data, encode various feature types, and build a model that accepts raw inputs.

Using this approach has saved me countless hours on data cleaning and preprocessing pipelines. I highly recommend trying this out on your next tabular data project to see how much it simplifies your code.

If you found this guide helpful, you might also want to explore more about Keras preprocessing layers. There are many ways to customize these steps for even more complex datasets.


Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.