If you have ever wondered how Google understands the context of your search queries even when you miss a word, you are looking at the power of Masked Language Modeling.

In my four years of developing deep learning solutions with Python Keras, I have found BERT to be the most reliable architecture for capturing bidirectional context in text.

Training BERT on a Masked Language Modeling objective might seem intimidating at first, but once you break it down into a pipeline, it becomes a very logical and manageable process.

In this tutorial, I will walk you through the entire workflow of setting up a Masked Language Modeling (MLM) task using Python Keras and the Hugging Face ecosystem.

Set Up Your Environment for Python Keras MLM

Before we dive into the architecture, we need to ensure our environment is equipped with the necessary libraries to handle heavy-duty tensor operations and tokenization.

I always start by installing the Transformers library alongside TensorFlow to bridge the gap between pre-trained BERT utilities and the Keras functional API.

```python
# Install necessary libraries (notebook syntax).
# Assumes a transformers/TensorFlow combination in which TFBertModel is available.
!pip install transformers tensorflow

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
from transformers import BertTokenizer, TFBertModel
import numpy as np
```

This setup gives us access to the BERT tokenizer, which converts our raw English text into numerical input IDs.

By using the TFBertModel class, we can seamlessly integrate BERT layers into our standard Keras training loops and model serialization workflows.

Prepare the Dataset for BERT MLM in Python Keras

For this example, I am using a collection of sentences related to popular travel destinations in the United States to keep the context relatable and practical.

We need to tokenize the text and then create a “mask” where roughly 15% of the tokens are hidden, forcing the model to predict the missing tokens.

# Sample dataset based on US Travel Context

data = [

"The Grand Canyon is a steep-sided canyon carved by the Colorado River in Arizona.",

"New York City is known for its iconic skyline and the bustling energy of Times Square.",

"The Golden Gate Bridge is a famous suspension bridge spanning the strait in California.",

"Yellowstone National Park is home to the famous Old Faithful geyser and diverse wildlife."

]

tokenizer = BertTokenizer.from_pretrained("bert-base-uncased")

inputs = tokenizer(data, padding=True, truncation=True, return_tensors="tf")

# Create labels (clone of input_ids)

inputs["labels"] = tf.identity(inputs["input_ids"])

# Create the mask (15% probability)

rand = tf.random.uniform(inputs["input_ids"].shape)

mask_arr = (rand < 0.15) * (inputs["input_ids"] != 101) * (inputs["input_ids"] != 102) * (inputs["input_ids"] != 0)

# Apply mask (BERT mask token ID is 103)

indices = tf.where(mask_arr)

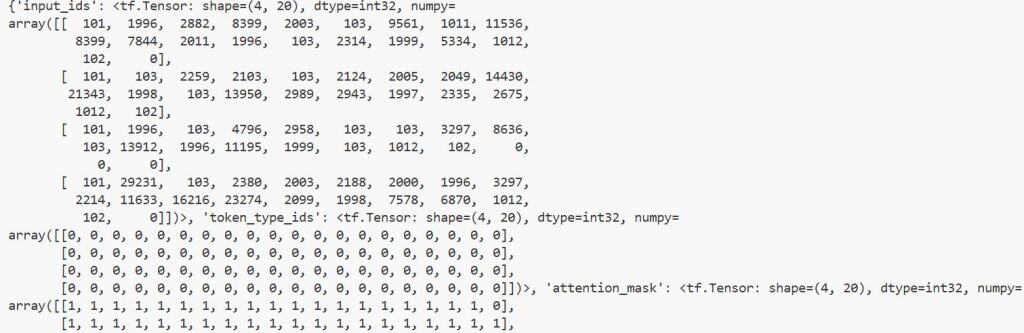

inputs["input_ids"] = tf.tensor_scatter_update(inputs["input_ids"], indices, tf.fill([len(indices)], 103))You can refer to the screenshot below to see the output.

I have found that manually handling the mask indices gives you much better control over ensuring special tokens like [CLS] and [SEP] are never accidentally masked.

This preprocessing step is the backbone of the MLM task, as it defines exactly what the Python Keras model needs to learn during the reconstruction phase.
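The masking above replaces every selected position with [MASK]. The original BERT pre-training recipe is slightly different: of the selected tokens, about 80% become [MASK], about 10% become a random token, and about 10% stay unchanged. Here is a minimal NumPy sketch of that variant. The function name `bert_style_mask`, the toy IDs, the fixed seed, and the lower bound for random replacements are all assumptions for illustration, not part of the pipeline above.

```python
import numpy as np

CLS_ID, SEP_ID, PAD_ID, MASK_ID = 101, 102, 0, 103  # bert-base-uncased special token IDs
VOCAB_SIZE = 30522  # vocabulary size of bert-base-uncased


def bert_style_mask(input_ids, mask_prob=0.15, seed=0):
    """Return (masked_ids, selected) using an 80/10/10 replacement split."""
    rng = np.random.default_rng(seed)
    input_ids = np.asarray(input_ids)
    masked = input_ids.copy()

    # Select candidate positions, skipping [CLS], [SEP], and [PAD].
    special = np.isin(input_ids, [CLS_ID, SEP_ID, PAD_ID])
    selected = (rng.random(input_ids.shape) < mask_prob) & ~special

    # A second random draw splits the selected positions into three groups.
    split = rng.random(input_ids.shape)
    to_mask = selected & (split < 0.8)                     # ~80%: replace with [MASK]
    to_random = selected & (split >= 0.8) & (split < 0.9)  # ~10%: random token
    # The remaining ~10% of selected positions keep their original token.

    masked[to_mask] = MASK_ID
    # Starting at 1000 is an illustrative choice to stay clear of the special-token range.
    masked[to_random] = rng.integers(1000, VOCAB_SIZE, size=int(to_random.sum()))
    return masked, selected


# Arbitrary illustrative IDs framed by [CLS] ... [SEP] and two [PAD] tokens.
ids = np.array([[101, 1996, 2882, 8399, 2003, 1037, 9561, 102, 0, 0]])
masked_ids, selected = bert_style_mask(ids, mask_prob=0.5)
print(masked_ids)
print(selected)
```

The `selected` array marks which positions the model would be asked to reconstruct, which is useful if you later want to score only those positions.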

Build the Masked Language Model with Python Keras Functional API

Now we will define the model architecture where we wrap the BERT backbone and add a prediction head on top to output word probabilities.

I prefer using the Functional API here because it allows us to clearly define multiple inputs and outputs, which is standard for transformer-based architectures.

def build_mlm_model(transformer_model):

input_ids = layers.Input(shape=(None,), dtype=tf.int32, name="input_ids")

attention_mask = layers.Input(shape=(None,), dtype=tf.int32, name="attention_mask")

# BERT Backbone

sequence_output = transformer_model(input_ids, attention_mask=attention_mask)[0]

# Prediction Head

mlm_output = layers.Dense(tokenizer.vocab_size, activation="softmax", name="mlm_classifier")(sequence_output)

model = keras.Model(inputs=[input_ids, attention_mask], outputs=mlm_output)

model.compile(optimizer=keras.optimizers.Adam(learning_rate=5e-5), loss="sparse_categorical_crossentropy")

return model

bert_base = TFBertModel.from_pretrained("bert-base-uncased")

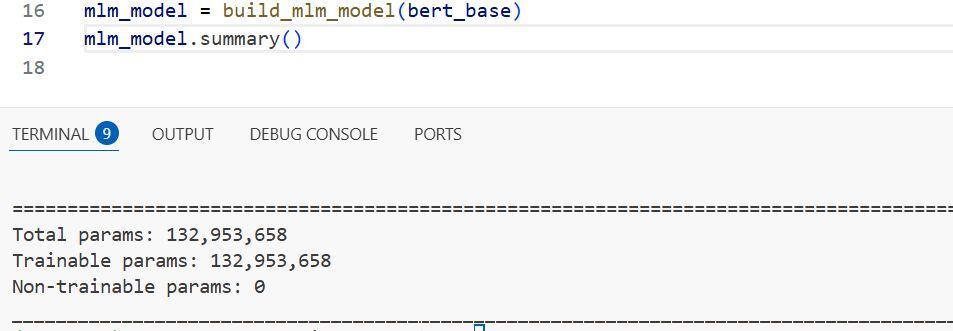

mlm_model = build_mlm_model(bert_base)

mlm_model.summary()You can refer to the screenshot below to see the output.

The Softmax activation at the final layer is crucial because it transforms the hidden states into a probability distribution across our entire vocabulary.
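To make the role of the softmax concrete, here is a small NumPy sketch that turns raw scores (logits) for a single token position into probabilities. The five-entry "vocabulary" and the logit values are made up for illustration; the real head produces one score per entry of the BERT vocabulary.

```python
import numpy as np


def softmax(logits, axis=-1):
    """Numerically stable softmax: subtract the max before exponentiating."""
    shifted = logits - np.max(logits, axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=axis, keepdims=True)


logits = np.array([2.0, 1.0, 0.1, -1.0, 3.5])  # invented scores for 5 tokens
probs = softmax(logits)

print(probs.round(3))  # every entry is positive
print(probs.sum())     # the entries add up to 1 (up to floating-point error)
```

Subtracting the maximum does not change the result mathematically, but it keeps `np.exp` from overflowing when the logits are large.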

In my experience, keeping the learning rate low (around 5e-5) is the key to preventing the pre-trained weights from diverging during the fine-tuning process.

Train the BERT Model using Python Keras Fit Method

With our model compiled and our data masked, we can now run the training loop to let the model learn the nuances of the English language.

Since our dataset is small for this demonstration, we only need a few epochs to see the loss decrease as the model identifies patterns in the US-themed sentences.

# Training the model

```python
history = mlm_model.fit(
    x={"input_ids": inputs["input_ids"], "attention_mask": inputs["attention_mask"]},
    y=inputs["labels"],
    epochs=10,
    batch_size=2,
)
```

During training, the model focuses on minimizing the difference between its predicted tokens and the original tokens we saved in our labels.
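Because the labels are a full copy of the original IDs, the loss in the training call above is averaged over every position, including unmasked tokens and padding. A common refinement is to score only the masked positions. The NumPy sketch below illustrates the idea with a per-token weight; the helper name `masked_cross_entropy` and the toy arrays are assumptions for illustration.

```python
import numpy as np


def masked_cross_entropy(probs, labels, weights):
    """Mean negative log-likelihood over positions where weights == 1.

    probs:   (batch, seq_len, vocab) predicted probabilities
    labels:  (batch, seq_len) original token IDs
    weights: (batch, seq_len) 1.0 at masked positions, 0.0 elsewhere
    """
    batch_idx, seq_idx = np.indices(labels.shape)
    true_probs = probs[batch_idx, seq_idx, labels]  # probability of the true token
    nll = -np.log(np.clip(true_probs, 1e-12, 1.0))
    return float((nll * weights).sum() / max(weights.sum(), 1.0))


# Toy example: 1 sentence, 3 positions, vocabulary of 4 tokens.
probs = np.array([[[0.70, 0.10, 0.10, 0.10],
                   [0.25, 0.25, 0.25, 0.25],
                   [0.10, 0.10, 0.10, 0.70]]])
labels = np.array([[0, 2, 3]])
weights = np.array([[0.0, 1.0, 0.0]])  # only the middle position was masked

loss = masked_cross_entropy(probs, labels, weights)
print(loss)
```

In Keras, weights like these could be supplied through the `sample_weight` argument of `fit`. Whether per-token weights are accepted without further configuration depends on the Keras version, so treat that as something to verify rather than a guaranteed recipe.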

I always monitor the loss curve closely; a steady decline indicates that the Python Keras model is effectively learning the semantic structure of the text.
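One simple way to check for a "steady decline" programmatically is to smooth the per-epoch losses and compare consecutive values. The helpers below are a hypothetical sketch. In this tutorial's setup the list would come from `history.history["loss"]`, and the numbers used here are invented for illustration.

```python
def moving_average(values, window=3):
    """Average each value with up to window-1 preceding values."""
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


def is_steadily_decreasing(losses, window=3):
    """True if the smoothed loss never increases from one epoch to the next."""
    smoothed = moving_average(losses, window)
    return all(later <= earlier for earlier, later in zip(smoothed, smoothed[1:]))


losses = [9.8, 7.1, 5.4, 5.6, 4.0, 3.2]  # invented per-epoch losses
print(is_steadily_decreasing(losses))
```

Smoothing keeps a single noisy epoch (such as the small bump from 5.4 to 5.6) from being mistaken for a real reversal of the trend.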

Perform Inference with Python Keras MLM

The most exciting part is seeing the model “fill in the blanks” for a brand-new sentence that it has never seen before.

We will provide a sentence about a famous US landmark with one word masked out and let the model predict what belongs in that empty space.

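```python
# Test sentence: "The Statue of [MASK] is in New York."
test_text = "The Statue of [MASK] is in New York."
test_inputs = tokenizer(test_text, return_tensors="tf")

# Pass only the two inputs the Keras model was built with
preds = mlm_model({
    "input_ids": test_inputs["input_ids"],
    "attention_mask": test_inputs["attention_mask"],
})

# Locate the [MASK] token (ID 103) and take the most probable vocabulary entry
mask_token_index = tf.where(test_inputs["input_ids"] == 103)[0, 1]
predicted_token_id = tf.argmax(preds[0, mask_token_index]).numpy()
predicted_word = tokenizer.decode([predicted_token_id])
print(f"Predicted word: {predicted_word}")
```

With a well-trained model, the output should ideally be “liberty,” showing that the BERT model has grasped the geographical and cultural context provided in the input. With only four training sentences and a freshly initialized prediction head, however, expect a much less reliable guess.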

Using tf.argmax allows us to pick the most likely candidate from the roughly 30,000 tokens in the BERT vocabulary based on the context.
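Because argmax keeps only the single best guess, it can be useful to inspect several candidates. The sketch below ranks the top-k entries of a probability vector with NumPy. The tiny vocabulary and the probabilities are invented; with the real model, the vector would be `preds[0, mask_token_index]` and the IDs would be decoded with the tokenizer.

```python
import numpy as np


def top_k(probs, k=3):
    """Return (indices, probabilities) of the k largest entries, best first."""
    order = np.argsort(probs)[::-1][:k]
    return order, probs[order]


vocab = ["liberty", "freedom", "unity", "river", "bridge"]  # invented toy vocabulary
probs = np.array([0.55, 0.25, 0.10, 0.06, 0.04])            # invented probabilities

indices, scores = top_k(probs, k=3)
for idx, score in zip(indices, scores):
    print(f"{vocab[idx]}: {score:.2f}")
```

Looking at a short ranked list often tells you more about what the model has learned than the top prediction alone, especially on a small dataset.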

I hope this tutorial helped you understand how to build and train a Masked Language Model using Python Keras.

Setting up the masking logic is usually the hardest part, but once you have that handled, the Keras API makes the rest of the workflow very smooth.

Bijay Kumar is an experienced Python and AI professional who enjoys helping developers learn modern technologies through practical tutorials and examples. His expertise includes Python development, Machine Learning, Artificial Intelligence, automation, and data analysis using libraries like Pandas, NumPy, TensorFlow, Matplotlib, SciPy, and Scikit-Learn. At PythonGuides.com, he shares in-depth guides designed for both beginners and experienced developers.