Image Classification Using the Forward-Forward Algorithm in Keras

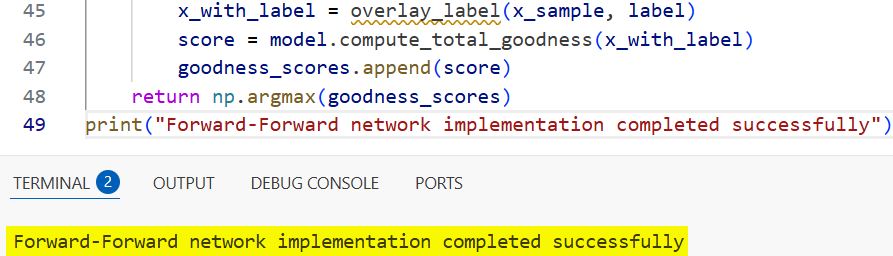

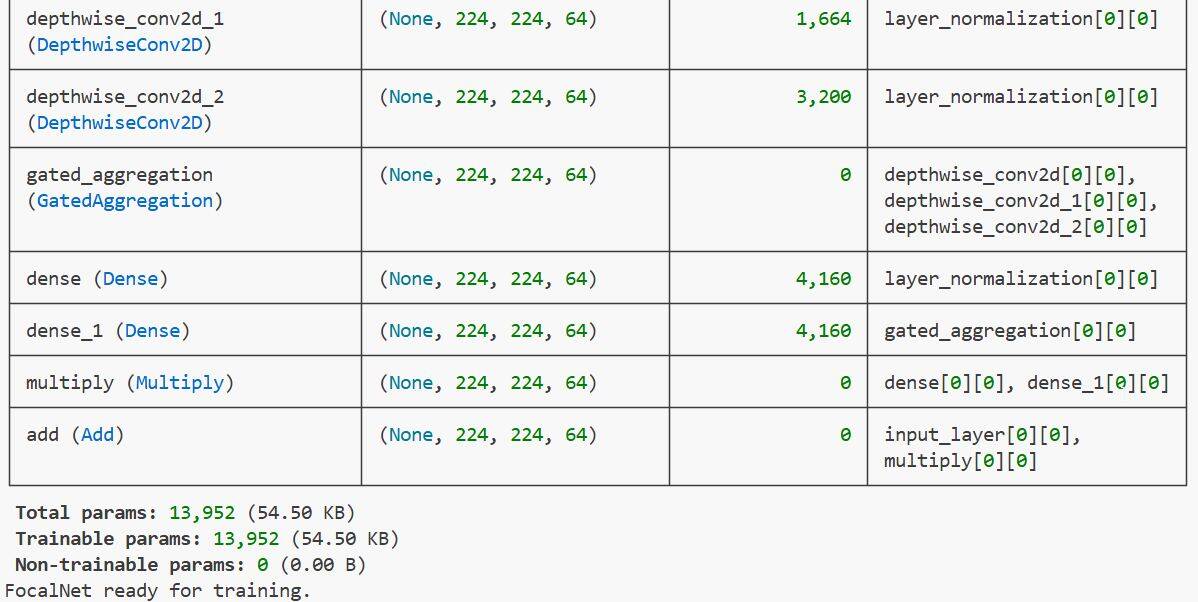

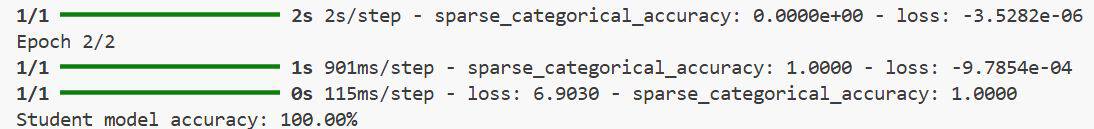

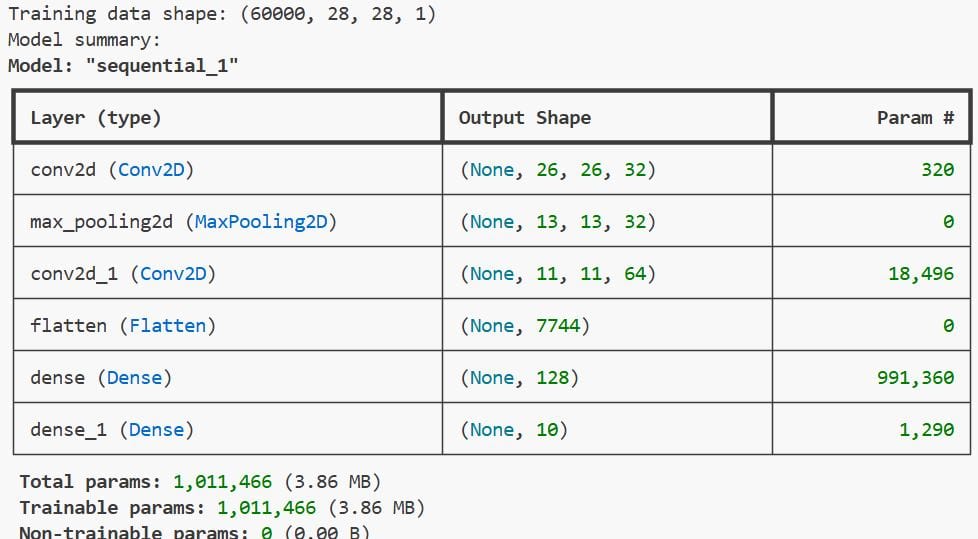

Over my four years as a Keras developer, I have spent countless hours debugging backpropagation gradients, and it is frustrating when they vanish or explode while training deep networks. Recently, I started experimenting with Geoffrey Hinton’s Forward-Forward (FF) algorithm as an alternative to backpropagation. It replaces the traditional backward pass with two forward passes, one with …